Autonomous Navigation with DRL on TurtleBot3/4 (under revision)

Under the mentorship of Professor McCarrin, this project develops autonomous navigation and obstacle avoidance for the TurtleBot3 and TurtleBot4.

Welcome to the project hub for our exploration of Deep Reinforcement Learning (DRL) in autonomous navigation using the TurtleBot.

This research has evolved over the semesters as the team's interests grew and new possibilities opened up:

- Fall 2023: Focused on surface-level autonomous vehicles, introduced to ROS and Gazebo

- Spring 2024: Acquired TurtleBot 3 Burger, learned about SLAM and the possibility of fiducial markers

- Fall 2024: Shifted focus to deep reinforcement learning for obstacle avoidance, primarily based on Tomas van Rietbergen’s work

- Spring 2025: Acquired TurtleBot 4, considering continuing work on DRL and sensor fusion

Our work has two key objectives: obstacle avoidance and dynamic target following. By integrating DRL with PyTorch, we aim to improve the robot’s decision-making in real-time scenarios.

About the Project

Problem Statement

Navigating dynamic and cluttered environments autonomously remains a challenging problem for robots.

Objectives

- Implement the DRL-based real-time obstacle avoidance approach of van Rietbergen T.L on a robot equipped with the LD08 sensor.

- Enable dynamic target following while maintaining obstacle avoidance.
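To make the two objectives concrete, here is a minimal sketch of how obstacle avoidance and target following might be combined in a single per-step reward. It is an illustration only, not the reward used in van Rietbergen's code or in our models; the function name `step_reward` and all thresholds and weights are hypothetical.

```python
def step_reward(prev_goal_dist, goal_dist, min_scan_range,
                collision_dist=0.15, safe_dist=0.40, goal_radius=0.20):
    """Return (reward, done) for one control step.

    prev_goal_dist / goal_dist: distance to the target before and after the step (m).
    min_scan_range: closest obstacle distance seen in the current laser scan (m).
    All thresholds and weights below are illustrative assumptions.
    """
    # Terminal case 1: the robot is too close to an obstacle.
    if min_scan_range <= collision_dist:
        return -100.0, True

    # Terminal case 2: the robot reached the (possibly moving) target.
    if goal_dist <= goal_radius:
        return 100.0, True

    # Target following: reward progress made toward the target this step.
    reward = 10.0 * (prev_goal_dist - goal_dist)

    # Obstacle avoidance: linear penalty that grows from 0 at safe_dist
    # to 1 at collision_dist.
    if min_scan_range < safe_dist:
        reward -= (safe_dist - min_scan_range) / (safe_dist - collision_dist)

    return reward, False
```

Because the target distance is re-measured at every step, the same signal applies whether the target is static or moving; only the way `goal_dist` is obtained would change.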

Motivation

This research addresses autonomous navigation challenges in robotics, with practical applications in service robots, autonomous warehouse vehicles, and similar domains.

Research Methodology

Robot Platform

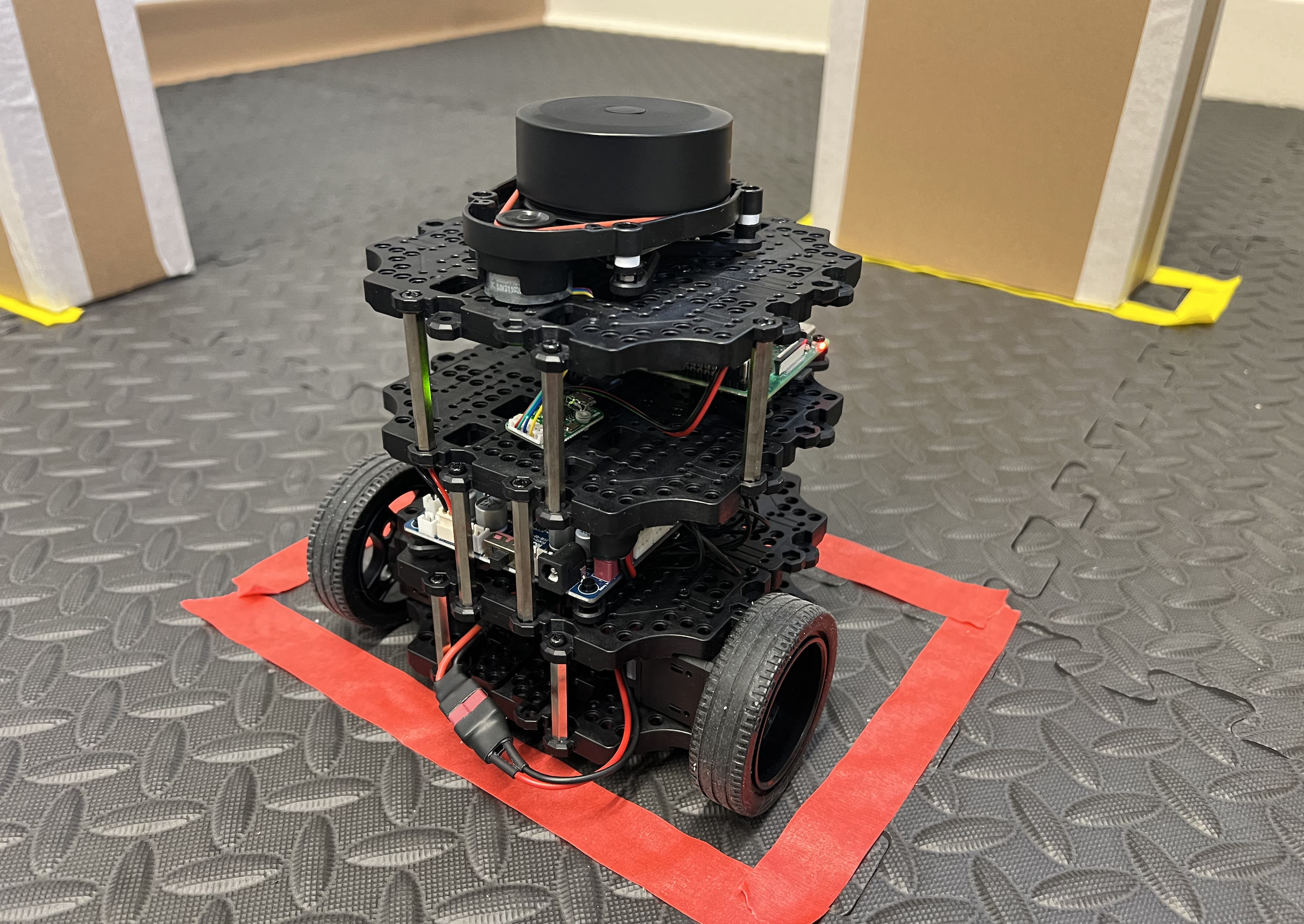

- TurtleBot3 Burger: Compact, ROS-compatible, ideal for research and education.

- TurtleBot4: Better documentation, strong sensing capabilities (RGB-D camera, infrared sensors, etc.), and built-in features.

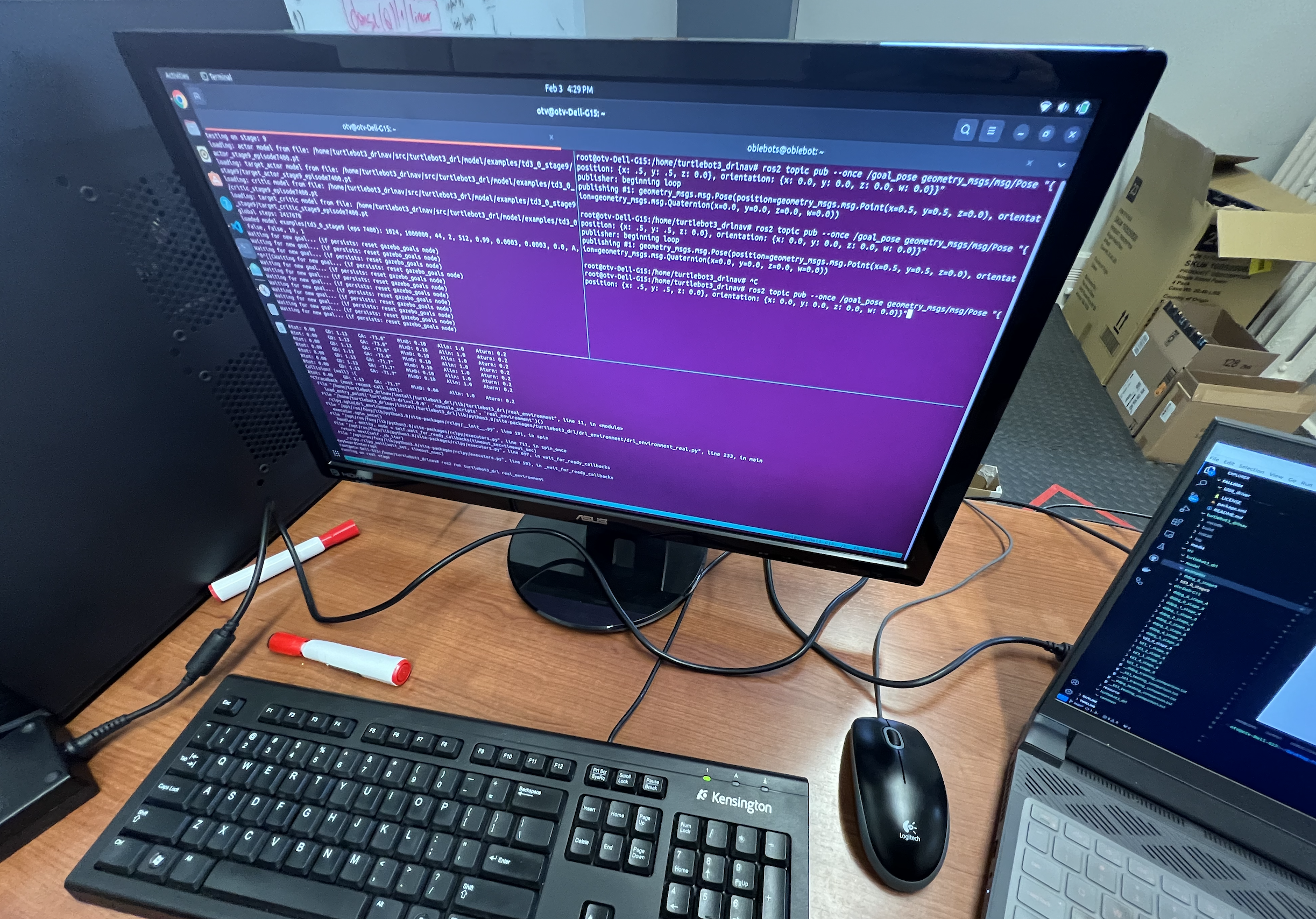

Software Stack

- ROS: Robot Operating System for communication and control.

- PyTorch: Framework for developing DRL models.

- Gazebo: Simulation environment for testing and training.

Approach

- Simulations: Training DRL models in controlled virtual environments.

- Real-world Testing: Refining models on the physical TurtleBot 3 and 4.

Challenges

- Overcoming outdated documentation for the TurtleBot 3 and its driver.

- Adapting DRL techniques for hardware limitations.

- Integrating multiple robots (TurtleBot 3 and 4).
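One way hardware differences can surface in a DRL pipeline is the laser scan itself: a policy trained on one scan layout may receive a different number of beams or invalid readings on another sensor. The sketch below shows one possible preprocessing step that reduces a raw list of ranges to a fixed-size, normalized input. The function name `preprocess_scan`, the beam count, and the `max_range` default are assumptions for illustration and should be checked against the actual sensor and model.

```python
import math

def preprocess_scan(ranges, num_beams=40, max_range=8.0):
    """Reduce a raw laser scan to num_beams values in [0, 1].

    ranges: list of distances in meters; may contain inf or NaN.
    num_beams, max_range: illustrative defaults, not sensor specifications.
    """
    if not ranges:
        raise ValueError("empty scan")

    # Replace invalid readings with max_range and clip everything to [0, max_range].
    cleaned = [
        max_range if (math.isnan(r) or math.isinf(r)) else min(max(r, 0.0), max_range)
        for r in ranges
    ]

    # Split the scan into num_beams angular sectors and keep the closest
    # reading in each one, so no nearby obstacle is averaged away.
    n = len(cleaned)
    beams = []
    for i in range(num_beams):
        start = i * n // num_beams
        end = max((i + 1) * n // num_beams, start + 1)
        beams.append(min(cleaned[start:end]) / max_range)
    return beams
```

Taking the minimum per sector is a conservative choice; averaging would give a smoother input but could hide thin obstacles.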

Results

In progress.

Demonstrations

In progress.

Metrics

- Success rates for navigation tasks.

- Training times and resource efficiency.
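As a sketch of how a success rate could be computed from logged evaluation runs, the snippet below tallies episode outcomes into rates. The outcome labels (`"success"`, `"collision"`, `"timeout"`) and the function name `summarize_episodes` are hypothetical, and no real results are implied.

```python
from collections import Counter

def summarize_episodes(outcomes):
    """Return the fraction of episodes ending in each outcome.

    outcomes: list of strings, one per evaluation episode.
    The three labels below are illustrative assumptions.
    """
    total = len(outcomes)
    if total == 0:
        return {}
    counts = Counter(outcomes)
    return {label: counts[label] / total
            for label in ("success", "collision", "timeout")}

# Example with made-up outcomes, purely to show the call.
rates = summarize_episodes(["success", "success", "collision", "timeout"])
```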

Resources

Codebase

- Our GitHub Repository: Our modifications and models.

- Original GitHub Repository: van Rietbergen T.L, Master's thesis at TU Delft.

Datasets

- Details about simulation data and real-world test results (in progress).

Documentation

- Tutorials for setting up the TurtleBot3 and replicating our experiments (in progress).

Team

- Prof. Michael R. McCarrin (Advisor)

- Otavio Paz Nascimento (Student Researcher - 2023-Present)

- Rosie McKusick (Student Researcher - 2023)

- Eliza Bomfim Guimaraes (Student Researcher - 2023)